Building Tight Games With Game Metrics (Part 2)

Jens Peter

Jens Peter Jensen finished an MSc in Game Design in 2012 at ITU Copenhagen and has been on a quest ever since to better understand games and especially their players.

Two weeks ago we started “metricifying” the blog post “Building tight game systems of cause and effect” by Daniel Cook, Chief Creative Officer at SpryFox. This week we begin part two of this game-analytical journey. If you missed the first part, you can find it here.

6. Pacing

“Tighter: Short time lapses between cause and effect. When creating mouse over boxes like you find in Diablo, a common mistake is to add a delay between when the mouse is over the inventory item and when the hover dialog appears. If the delay is too short, the hover dialog pops up when the player doesn’t expect it. If the delay is too long, the dialog feels laggy and non-responsive. (In my experience, 200ms seems ideal. That’s right inside the perception gap where you’ve decided to do something, but your conscious mind hasn’t quite caught up)”

“Looser: Long time lapses between cause and effect. Too long and the player misses that there is an effect at all. Imagine an RPG where you have a switch and a timer. If you hit the switch, a door opens 60 seconds later. Surprisingly few people will figure out that the door is linked to the switch. On the other hand, early investment in industry in Alpha Centauri resulted in alien attacks deep in the end game. This created a richer system of interesting trade off for players to manipulate over a long time span.”

Corresponding Metric: Cause and effect per game session

Determining whether a player understands the correlation between action and consequence is difficult to achieve through user research methods. Even if you ask players directly, they might not be able to fully explain how they see the link between action and reaction. A lot of research has been, and still is being, conducted within the field of psychology on how humans understand the connection between cause and effect. It is a complicated field, and therefore it translates into a difficult task for game developers as well, especially if there is a large delay between the moments when cause and effect take place.

As Cook writes, the general rule is: the shorter the time between cause and effect, the easier it is for users to understand their correlation. The longer the time between the two, the more likely it is that the user will fail to associate the two events, and the more likely still that they will associate the effect with another, incorrect cause.

As with Strength of Feedback and Noise, using metrics to determine if the player understands something is a challenging task, but it can be done by using creative metrics and by comparing the results of different metrics. Casual games, for example, are characterized by short game sessions that stop and start at the user’s discretion. This means that if there are instances where cause and effect are far from each other, they could be in different game sessions hours apart. That would make it very difficult for the player to understand the connection between the two.

One way to track the tightness of the time between cause and effect (or “pacing”) could be by determining if cause and effect are in the same game session. That translates into identifying the cause and effect of in-game actions, and then setting up metrics to measure how much game time and real time there is between cause and effect. This does mean that the game designer has to be able to determine what the effects of long term game play are, either by prediction or through play testing. But when this is done, it would be possible to define the actual metrics.

This should apply only to instances where there is a long time between cause and effect, long meaning anything over 200ms. Yes, the human mind is easily distracted, so a long time in this instance is anything that is not instantaneous. If there are any cases where the cause and effect are in different game sessions, the game designer should take extra care in communicating the connection between them. Again, the longer the time between cause and effect, the more effort has to be put into the game design in order to explain the connection. If the cause-and-effect metrics data is then compared to retention data, it could help identify moments where the player has difficulty understanding long-term cause and effect relations.
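As a rough illustration, the pacing metric described above could be sketched like this in Python. The `Event` record, its field names and the `cause_effect_gap` function are assumptions made up for this example and do not come from any particular analytics SDK. The 200ms threshold is the figure quoted from Cook.

```python
from dataclasses import dataclass


@dataclass
class Event:
    """Hypothetical telemetry record; all field names are assumptions."""
    name: str
    session_id: str
    game_time_s: float  # accumulated play time when the event fired, in seconds
    real_time_s: float  # wall-clock timestamp of the event, in seconds


def cause_effect_gap(cause: Event, effect: Event, instant_threshold_s: float = 0.2) -> dict:
    """Measure how far apart a cause and its effect are for one player."""
    real_gap = effect.real_time_s - cause.real_time_s
    return {
        "game_time_gap_s": effect.game_time_s - cause.game_time_s,
        "real_time_gap_s": real_gap,
        # Pairs that span two sessions need extra care from the designer.
        "same_session": cause.session_id == effect.session_id,
        # Anything slower than the threshold counts as "long" in this sketch.
        "is_long": real_gap > instant_threshold_s,
    }


# Example: the switch is hit in one session, the door opens in a later one.
switch = Event("hit_switch", "session-1", game_time_s=100.0, real_time_s=1000.0)
door = Event("door_opens", "session-2", game_time_s=160.0, real_time_s=8200.0)
gap = cause_effect_gap(switch, door)
```

Aggregating the `same_session` flag over all players would show how often a given cause and effect end up in different sessions, which is the number that could then be compared against retention data.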

“Pacing” is of course a much bigger issue in some games than in others. “Match three objects” games like Candy Crush Saga have mostly instantaneous effects, while multiplayer strategy games like Kingdoms of Camelot and social tap economy games like Hay Day have a very long time between cause and effect. If a game developer wants to make a strategy game and aims to keep the player engaged for a long time, it is very important for them to be aware of causes and effects that can be weeks apart. For instance, in Kingdoms of Camelot it can be hard for players to understand why they are being farmed by stronger players as soon as the “new player protection” ends, and what they could have done to avoid that.

7. Linearity

“Tighter: Linearly increasing variables are more predictable. Consider the general friendliness of throwing a sword in a straight line in Zelda versus catching an enemy with an arcing boomerang while moving.

Looser: Non-linearly increasing variables, less so. The Medusa heads in Castlevania pose a surprisingly difficult challenge to many players because tracking them breaks the typical expectation linear movement. Even something as commonplace as gravity throws most people off their game. After all, it took thousands of years before we figured out how to accurately land an artillery shell.”

Corresponding Metric: Measuring difficulty through object movement

It seems that Cook here refers to both the player’s ability to predict an action and the ease of successfully completing that action, which makes Linearity similar to the Tapping Existing Mental Models section in part 1. It basically means that players can easily predict where something is going if it goes in a straight line, and the more erratically something moves, the more difficult it is for the player to predict its movement. This is only relevant to games where the gameplay includes objects that move. In such cases, linearity is directly tied to game difficulty.

Therefore it is possible to set up a metric that looks at the movement patterns of objects in games in order to measure difficulty. In an action game like Frontline Commando: D-Day, the player depends on predicting the movements of the enemy units in order to be able to hit them. The controls are clumsy and it is difficult to aim and shoot at the same time, so the player’s main course of action is to maneuver the aim to where the target is going to appear, then wait and shoot. That means that the difficulty of the game depends on the player’s ability to predict enemy movement. A game metric could in this case be set up to measure how straight or erratic the enemies’ movement is, based on their x, y, z coordinates. That would give the developer an index of how difficult the different levels are, and a basis for tweaking this difficulty.

Translating position data to meaningful metrics is more challenging in 3D games than in 2D games. In 3D it is more difficult to define a straight line and to model how players perceive and predict movement, but it is still possible to set some parameters that track this.
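One possible way to turn x, y, z coordinates into a single number is a straightness index: the distance between the first and last sampled position divided by the total length of the path travelled. This is only one of several options and is an assumption of this sketch, not a measure prescribed by Cook. The function name and sample paths are made up for illustration.

```python
import math


def straightness_index(positions):
    """Net displacement divided by path length.

    1.0 means the object moved in a perfectly straight line;
    values closer to 0 mean more erratic movement.
    """
    if len(positions) < 2:
        return 1.0  # a single sample cannot be erratic
    path_length = sum(math.dist(a, b) for a, b in zip(positions, positions[1:]))
    if path_length == 0:
        return 1.0  # the object never moved
    return math.dist(positions[0], positions[-1]) / path_length


# Hypothetical sampled enemy positions as (x, y, z) tuples.
straight_path = [(0, 0, 0), (1, 0, 0), (2, 0, 0)]
zigzag_path = [(0, 0, 0), (1, 1, 0), (2, 0, 0)]

straight_score = straightness_index(straight_path)
zigzag_score = straightness_index(zigzag_path)
```

Averaging such a score over all enemies in a level would give a rough per-level index. It says nothing about speed or acceleration, so a fuller difficulty measure would probably need to combine it with other parameters.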

8. Indirection

“Tighter: Primary effects where the cause is directly related to the effect. In Zelda again, the primary attack is highly direct. You press a button, the sword swings out and a nearby enemy is hit.”

“Looser: Secondary effects where the cause triggers a secondary (or tertiary) system that in turn triggers an effect. Simulations and AI’s are notorious for rapidly become indecipherable due to numerous levels of indirection. In a game of SimEarth, it was often possible to noodle with variables and have little idea what was actually happening. However, the immense indirection yields systems that people can play with for decades.”

Corresponding Metric: Code tracking

This is very similar to the concept of “Pacing” described earlier, in terms of how strong the connection is between cause and effect, and the metrics described in Pacing can also be used to measure Indirection.

Another way to measure the strength of the connection between cause and effect is to measure how many intermediate agents stand between the player’s action and its effect. Simulator games are a good example of games where effects do not originate directly from player actions. Simulators have a lot of different variables influencing a very long list of states, which in turn influence other states. These kinds of games can very easily become so complicated that not even the game designers can figure out the cause and effect relationships any longer.

It is possible to set up a metrics system that tracks what pieces of program code influence what game states, and that way make a map of causality. This method is useful if the program code has grown in complexity beyond what the programming team can keep track of. It is also possible to get a sense of causality by simply mapping out when each state, value and method is being called in the code. This strategy can also be used as a debugging tool for missing code.
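A minimal sketch of such a map of causality is shown below, assuming that all game state is written through a single tracker object. The `CausalityTracker` class, its methods and the system names are hypothetical and only serve to illustrate the idea.

```python
from collections import defaultdict


class CausalityTracker:
    """Hypothetical state store that remembers which code wrote each state."""

    def __init__(self):
        self.state = {}
        # state name -> set of code sources that have written to it
        self.writers = defaultdict(set)

    def set(self, key, value, source):
        """Write a state value and record which piece of code did it."""
        self.state[key] = value
        self.writers[key].add(source)

    def causality_map(self):
        """Return each state with the sorted list of sources that influence it."""
        return {key: sorted(sources) for key, sources in self.writers.items()}


tracker = CausalityTracker()
tracker.set("temperature", 21, source="climate_system")
tracker.set("temperature", 22, source="volcano_event")
tracker.set("population", 100, source="growth_system")

causality = tracker.causality_map()
```

A state with many writers is a candidate for heavy indirection. A state that the design expects but that never shows up in the map may point to missing code.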

9. Hidden information

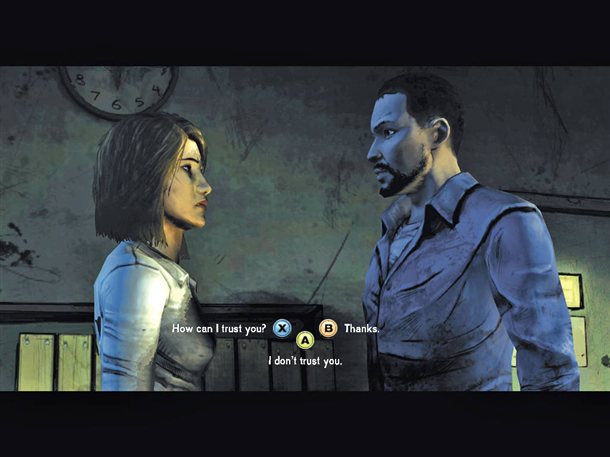

“Tighter: Visible sequences that are readily apparent. For example, in Triple Town we signal that a current position is a match. The game isn’t about matching patterns so instead the design goal is to make the available movement opportunities as obvious as possible.”

“Looser: Hidden information or off screen information. A game like Mastermind is entirely about a hidden code that must be carefully deciphered via indirect clues. Board games that are converted into computer games often accidentally hide information. In a board game, the systems are impossible to hide because they are manually executed by the players. However, in computers the rules are often simulated in the background, turning a previously comprehensible system into mysterious gibberish.”

Corresponding Metric: Used vs. displayed information

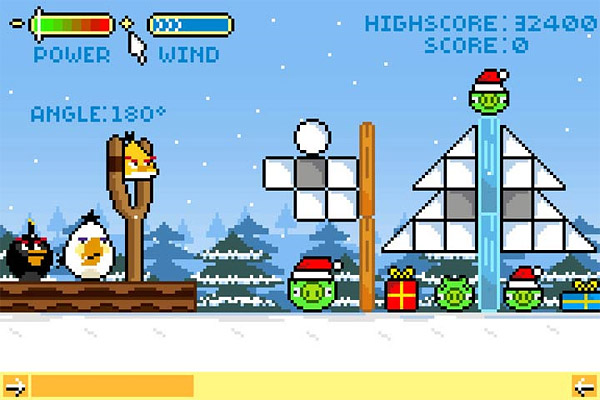

The quantity of information varies a lot from game to game. Angry Birds uses very few states and values, and most of them are binary, while SimCity has a very large number of states and values that all need to be calculated constantly to simulate the dynamic interactions of a city. It would not be possible to display all the information being processed at any given time in SimCity. Even if this information could be displayed, users might not be able to perceive it in a meaningful way.

Despite the game’s perceived simplicity, there is hidden information in Angry Birds as well. For instance, the power and angle are not displayed when aiming a shot, but in this case the purpose is to make the game more difficult.

Hiding information affects both how comprehensible and how difficult a game is. Thus, how hiding information influences a game depends heavily on what kind of game it is and what the designer is trying to achieve.

One way to examine the level of hidden information in a game is by creating a metric that tracks the number of states and values that are being handled in the code at a specific point in time. When looking at the data from this metric, the game designer knows exactly what information is being used over time and can then decide what to show on the screen, adding or removing information depending on what effect they wish to create. This data can also be used to evaluate if there are too many or too few processes going on in the background to achieve the desired level of complexity in the simulation.

This method could also be used to examine how much data there is on enemy units, and compare it to what information is available to the player. Then the difficulty can be raised or lowered by tweaking the amount of information, for example by indicating the time and place of the next enemy attack or by hiding the enemy’s strength.
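The comparison between used and displayed information could be sketched roughly as follows. The function and the state names are assumptions for illustration. In a real game the two lists would have to come from the simulation code and the UI layer respectively.

```python
def information_visibility(used_states, displayed_states):
    """Compare the states the game uses with the ones shown to the player."""
    used = set(used_states)
    # Only count displayed states that the game actually uses.
    shown = set(displayed_states) & used
    hidden = used - shown
    visible_ratio = len(shown) / len(used) if used else 1.0
    return {"visible_ratio": visible_ratio, "hidden": sorted(hidden)}


# Hypothetical snapshot of states at one point in time.
used_now = ["enemy_strength", "enemy_position", "next_attack_time", "player_health"]
displayed_now = ["player_health", "enemy_position"]

visibility = information_visibility(used_now, displayed_now)
```

Tracking `visible_ratio` over time, or per level, would show where the player is working with the least information, and the `hidden` list names the candidates a designer could choose to reveal.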

10. Probability

“Tighter: Deterministic where the same effect always follows a specific cause. In a game like chess, the result of a move is always the same; a knight moves in an L and will capture the piece in lands upon. You can imagine a variant where instead you roll a die to determine the winner. You can make that tighter again by constraining the probability so that certain characters roll larger dice than others. The 1d20 Pawn of Doom is a grand horror.”

“Looser: Probabilistic so that sometimes one outcome occurs but occasionally a different one happens. In one prototype I worked on there was both a long time scale between the action and the results as well as a heavily weighted but still semi-random outcome. Players were convinced that the game was completely random and had zero logic. If you pacing is fast enough and your feedback strong enough, you might be able to treat this as a slot machine.”

Corresponding Metric: Event occurrence rate

On one hand, a single element of randomness in a game is easy to understand, and the outcome, even though random, is still manageable. On the other hand, if you have hundreds or even thousands of outcomes that might be interdependent, it is very difficult to keep an overview of the game’s unpredictability and its impact. It can therefore be helpful to create a metric that actually calculates the occurrence rate of a specific event. That way, the designer can see if the events are happening at the expected rate.

For loot drops in hack and slash games like the Diablo series, a complicated formula is used to create a random item every time a monster is killed. To make sure that the distribution between common and rare items is the desired one, it is necessary to track what items are dropped.

Looting was a major part of the gameplay in the Diablo games and many players complained about it in Diablo 3. It is very difficult to get the right balance between adequately rewarding the player and keeping them motivated to look for new items. Using game metrics that track the item drop rate, the game designer can regulate the balance of the game. This is very important in MMO games with a complex economy. EVE Online, for instance, keeps very tight control over what items are created and in what quantity, to maintain an economic balance that is fair to both new and old players.
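An event occurrence rate metric for loot drops could look roughly like this. The rarity labels and the expected rates are invented for the example and are not the actual drop rates of Diablo or any other game.

```python
from collections import Counter


def drop_rate_report(drops, expected_rates):
    """Compare observed drop rates per rarity with the rates the designer intended."""
    counts = Counter(drops)
    total = len(drops)
    report = {}
    for rarity, expected in expected_rates.items():
        observed = counts[rarity] / total if total else 0.0
        report[rarity] = {
            "observed": observed,
            "expected": expected,
            # Positive means the item drops more often than intended.
            "deviation": observed - expected,
        }
    return report


# Hypothetical log of 100 drops and the intended distribution.
logged_drops = ["common"] * 85 + ["rare"] * 15
intended = {"common": 0.9, "rare": 0.1}

report = drop_rate_report(logged_drops, intended)
```

With only a small number of logged drops, some deviation is expected from chance alone, so a real dashboard would want a large sample before the designer reacts to it.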

There are four more techniques described in Daniel Cook’s post. They will be covered in the last part of this article.